|

4/3/2023 0 Comments Redshift wlm

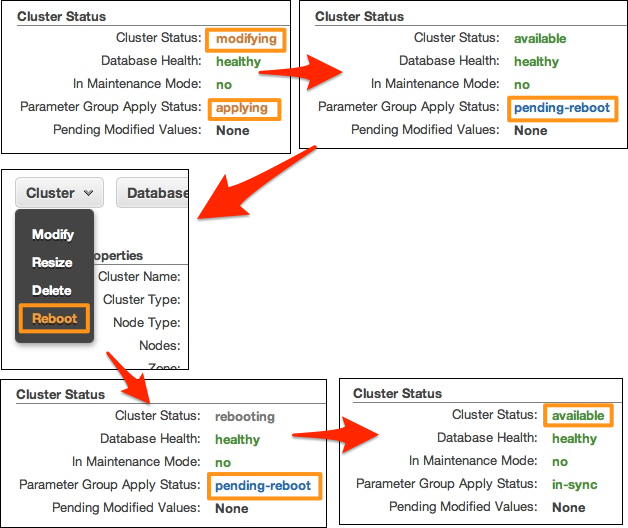

These queues have concurrency levels, meaning the number of workloads started at the same time. WLM groups these queues by a query group label defined by the user before the query execution. WLM comes to the rescue, as it creates what is called "query queues" at runtime. Then those chaotic independent microservices start to run on your Redshift clusters at the exact time that your KPIs trigger new processes on the same Redshift cluster.Those activities need to be cross-referenced with the geographical location of the last transactions.Your lead data scientist is deploying some machine learning models to detect possible fraudulent activities.With this feature on, you won't sacrifice being able to answer quick questions due to long-running processes, as it enables flexibility while managing your workloads. One of the faster ways of managing your query workflows is called workload management. Now that we know how to create the query plan, we can go deeper into query optimization -which is nothing more than refactoring your queries to lower the processing costs described by the query plan steps. > XN Merge Join DS_DIST_NONE (cost =0.00. > XN Hash Join DS_BCAST_INNER (cost =109.98. > XN Hash Join DS_BCAST_INNER (cost =112.50. > XN Hash Join DS_BCAST_INNER (cost =737.38. > XN Hash Join DS_BCAST_INNER (cost =741.94. > XN Hash Join DS_BCAST_INNER (cost =742.08. WARNING! ERRORS ENCOUNTERED DURING SQL PARSING! Here is the result of the above EXPLAIN command: So here's where Redshift tells you if what you wrote is what Redshift understood. Here's where the query plan comes in handy below, you can see all the steps Redshift executes based on the SQL you wrote. Redshift's power relies on heavy processing, so the bigger those tables are, the better for you, computationally speaking.īut how can you know that? Going deeper, how can you identify the smaller tables for you to take the necessary metrics and measures so important for your KPIs? Each one of them uses the INNER JOIN clause. Group by lastname, catname, venuename, venuecity, venuestate, eventname, month having sum(pricepaid) >9999įrom the EXPLAIN results above, I want to identify the tables: category, venue, sales, listing, date, and users. Select lastname, catname, venuename, venuecity, venuestate, eventname, month, sum(pricepaid) as buyercost, max(totalprice) as maxtotalpriceįrom category join event on category. Here's an example of an EXPLAIN Redshift command: With this execution blueprint, we can start to inspect the bottlenecks on it. Once the query is started (either by the console or by pragmatic access-and both API and CLI commands follow the same way), it generates a query plan, which is the query translation made by the parser while extracting the data from the nodes. Now let's look more in-depth at the process of querying. As for the other two major cloud providers, there's BigQuery on Google Cloud Platform and Synapse Analytics on Azure.Īnd if you're struggling with managing your data lake house with multiple clouds, you can do those tasks more easily with the help of Panoply's Redshift integration.

This kind of database is the top choice for storing data warehouses thanks to its capabilities of processing massive amounts of data in parallel. Redshift is Amazon's flavor of a massively parallel processing (or MPP) database system. What is Redshift, and how does query processing work? Query optimizations are very handy when creating your objects on your Panoply's Cloud Data Platform-increasing your transformations' speed, making it possible to explore more data with less. We'll finish with a quick conclusion of what we saw and some techniques for query optimizations. This intel will become handy as we go further down the rabbit hole and oversee the deployment of a good-though sometimes threatening-friend, workload management (or WLM) on Redshift. We'll also cover how concurrency works at a high level on Redshift. In this article, I'll present how Redshift query queues work. So our questions return accurate results as a result of extra processing power. Sometimes the systems that manage our queries can't process in the same way as we do. Even the most potent database is still prone to the terrifying error any database system can face: query bottlenecks.

0 Comments

Leave a Reply. |

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed